Brady Forrest writes about Milemeter, a new insurance startup. First, the words "insurance" and "startup" seem to form an oxymoron. But I don't see them listed, so I read on.

An interesting aspect of insurance to me is that the "product" is completely information-based (most of my career has been in software for the design and manufacture of tangible, electronic products; I currently work in the insurance industry). Unlike some other information-based products in financial services, though, insurance seems to be more heavily regulated, and the period between events is long (days, weeks, months). The most successful sale of a product (i.e. no claims, no changes to the coverage) has one significant event a year, the (re-)issue, plus some minor billing/payment events.

Compare this to financial portfolios, which can be wide-ranging and nearly unregulated (cf. the current sub-prime crisis and the obscure bundling of loans into supposedly secure instruments). There the period between trading events can be measured in fractions of a second, and the events can be distributed across worldwide markets.

On the one hand, there does not seem to be a lot of pressure to change the way information technology works in insurance. On the other hand, all these aspects seem to open up new opportunities for change.

Brady observes...

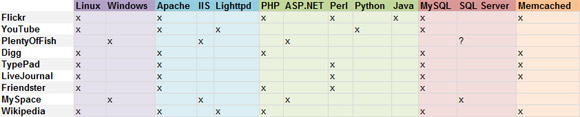

As you may have guessed, they are built on AWS (you can see a video discussing their usage on their blog). They are also using Ruby on Rails with Postgres...

The internet will sooner or later affect all these industries. (Amazingly, many of the current B2B transactions in insurance take place over proprietary networks, that is, when they are automated at all.)

This is what I want to see, large, black-box industries being taken down and made consumer-friendly. (Can the health system please be next?) I don't really know what I pay for with my current insurance, but with Milemeter I'll have a much better understanding.

Established insurance IT has to lower its cost of change significantly. The best way to do this is to copy the way software is developed for the internet. As Milemeter demonstrates, this change will come from the "outside" whether or not the "inside" is ready for it.

I had a chance to visit with Steve Loughran and some of his local friends when Steve was in Oregon last week. We had a good talk about all these changes, where they are heading, and which kinds of organizations are doing what along those trend lines.

There is no doubt that the cost of change is the limiting factor keeping established organizations from following those trends as aggressively as possible. The opportunities are there and the pressure to change will increase.

Chris Gay of Milemeter notes in the video mentioned above, "Amazon Web Services is a pay-as-you-go infrastructure and Milemeter is a pay-as-you-go insurance provider". The ability to use nimble infrastructure will help the product itself remain nimble.